On April 17, 2026, OpenAI committed more than $20 billion to Cerebras Systems for servers powered by the startup's wafer-scale AI chips. The deal spans three years, includes a $1 billion loan for data center construction, and gives OpenAI warrants for up to 10% of Cerebras's equity. The same day, Cerebras filed for an IPO on Nasdaq targeting a $35 billion valuation.

This is not a chip purchase. It is the largest infrastructure bet any AI lab has made outside of NVIDIA's ecosystem, and it signals a structural shift in how the most powerful AI companies plan to run their models.

The Deal Structure

The agreement, first reported by The Information and confirmed by Reuters, is structured around compute capacity rather than chip sales. Cerebras will make available 250 megawatts of server capacity per year from 2026 through 2028, with OpenAI holding options on an additional 1.25 gigawatts through 2030, according to Cerebras's IPO filing.

The financial mechanics are layered. OpenAI extended a $1 billion loan to Cerebras at 6% annual interest to fund data center construction. Cerebras can repay in cash or by providing compute services. In December 2025, Cerebras granted OpenAI warrants to purchase up to 33 million shares of Class N stock, which vest fully only if OpenAI purchases 2 gigawatts of computing power from the company.
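The capacity and loan figures can be cross-checked with a few lines of arithmetic. The sketch below assumes the 250 megawatts per year is additive across 2026, 2027, and 2028, and that the 6% rate is simple annual interest on the full principal. Neither assumption is stated in the reporting, and the variable names are illustrative.

```python
# Figures quoted in the article. The additive-capacity and simple-interest
# readings are assumptions made for illustration only.
MW_PER_YEAR = 250
COMMITTED_YEARS = (2026, 2027, 2028)
OPTION_MW = 1_250                # 1.25 GW of options through 2030
VESTING_THRESHOLD_MW = 2_000     # warrants vest fully at 2 GW purchased

LOAN_PRINCIPAL_USD = 1_000_000_000
LOAN_RATE_BPS = 600              # 6% expressed in basis points

# Capacity committed over the three contract years, then with all options.
committed_mw = MW_PER_YEAR * len(COMMITTED_YEARS)
total_with_options_mw = committed_mw + OPTION_MW

# Integer arithmetic avoids floating-point noise on dollar amounts.
annual_interest_usd = LOAN_PRINCIPAL_USD * LOAN_RATE_BPS // 10_000

print(f"Committed capacity: {committed_mw} MW")
print(f"Committed plus options: {total_with_options_mw} MW")
print(f"Reaches vesting threshold: {total_with_options_mw >= VESTING_THRESHOLD_MW}")
print(f"Interest per year on full principal: ${annual_interest_usd:,}")
```

Under these assumptions the committed capacity alone comes to 750 megawatts, so the warrants would vest fully only if OpenAI also exercises all of its options.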

The total value doubled from an initial $10 billion agreement signed in January 2026. OpenAI's potential equity stake of up to 10% would make it one of Cerebras's largest shareholders, blurring the line between customer and investor.

Why Cerebras, Not NVIDIA

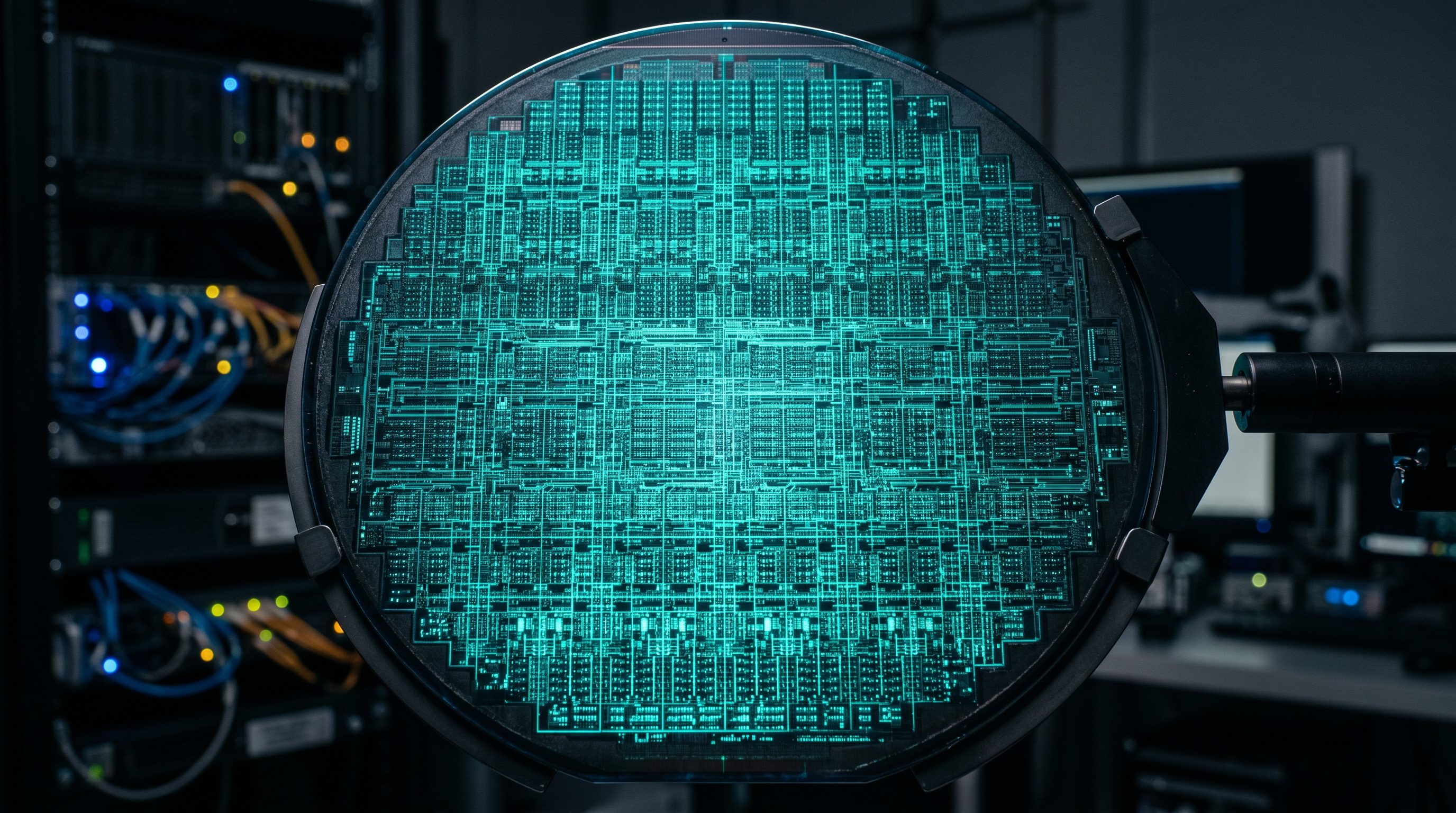

The answer is architecture. Cerebras builds the Wafer Scale Engine 3 (WSE-3), a single chip cut from an entire silicon wafer: 46,225 square millimeters, 4 trillion transistors, 900,000 AI cores, and 44 GB of on-chip SRAM. For comparison, the die in NVIDIA's H100 measures 814 square millimeters, so the WSE-3 has roughly 57 times the area.

The critical advantage is memory bandwidth. The WSE-3 delivers 21 petabytes per second of on-chip memory bandwidth, compared with roughly 3.35 terabytes per second of HBM bandwidth on the H100. That is roughly a 6,300x advantage. For inference workloads, where the bottleneck is often how fast the model can read its own weights from memory, this gap translates directly into speed.

| Specification | Cerebras WSE-3 | NVIDIA H100 | Cerebras Advantage |
|---|---|---|---|
| Chip size | 46,225 mm² | 814 mm² | 57x |
| AI cores | 900,000 | ~17,400 | 52x |
| On-chip memory | 44 GB SRAM | 0.05 GB | 880x |
| Memory bandwidth | 21 PB/s | 3.35 TB/s | ~6,300x |
| AI compute | 125 petaFLOPS | ~4 petaFLOPS (FP8) | ~31x |
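The advantage column is plain division, and it can be recomputed from the table's own figures. The minimal sketch below covers the four rows whose two values already share a unit; the `SPECS` dictionary and `advantage` helper are illustrative names, not anything published by Cerebras or NVIDIA.

```python
# (WSE-3 value, H100 value) in matching units, copied from the table.
SPECS = {
    "Chip size (mm^2)": (46_225, 814),
    "AI cores": (900_000, 17_400),
    "On-chip memory (GB)": (44, 0.05),
    "AI compute (petaFLOPS)": (125, 4),
}


def advantage(wse3: float, h100: float) -> float:
    """Return how many times larger the WSE-3 figure is than the H100 figure."""
    return wse3 / h100


ratios = {name: advantage(*pair) for name, pair in SPECS.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
```

Rounded to whole numbers, the results match the 57x, 52x, 880x, and 31x entries. The memory bandwidth row can be checked the same way once both values are in the same unit (1 PB/s is 1,000 TB/s).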

Cerebras claims the WSE-3 is 20 times faster than NVIDIA GPU-based solutions for comparable inference workloads. The on-chip SRAM eliminates the latency penalty of external HBM memory that plagues GPUs when serving large language models in real time.

The Cerebras IPO

Cerebras filed its S-1 registration with Nasdaq on April 17 under the ticker CBRS, targeting a $35 billion valuation and aiming to raise $3 billion. Morgan Stanley, Citigroup, Barclays, and UBS are leading the underwriting.

The valuation trajectory has been steep. Cerebras was valued at $8.1 billion in September 2025, then $23 billion in a February 2026 Series H round. The $35 billion IPO target would represent a more than fourfold increase over the September 2025 figure in seven months.
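The multiples behind that trajectory are easy to verify. The sketch below uses only the three valuations quoted above; the `VALUATIONS` mapping and `months_between` helper are illustrative, and month-level dates are an assumption since the article gives no exact days.

```python
# Valuations in billions of US dollars, keyed by (year, month), as quoted above.
VALUATIONS = {
    (2025, 9): 8.1,    # September 2025
    (2026, 2): 23.0,   # February 2026 Series H
    (2026, 4): 35.0,   # April 2026 IPO target
}


def months_between(start, end):
    """Whole calendar months from start to end, each a (year, month) tuple."""
    return (end[0] - start[0]) * 12 + (end[1] - start[1])


first, last = min(VALUATIONS), max(VALUATIONS)
elapsed_months = months_between(first, last)
overall_multiple = VALUATIONS[last] / VALUATIONS[first]
since_series_h = VALUATIONS[last] / VALUATIONS[(2026, 2)]

print(f"{elapsed_months} months, {overall_multiple:.2f}x overall, "
      f"{since_series_h:.2f}x since the Series H")
```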

This is Cerebras's second attempt at going public. A 2024 effort was withdrawn after CFIUS raised national security concerns over the company's dependence on G42, a UAE state-backed technology group that accounted for 83% to 97% of Cerebras's revenue. G42 has since been replaced by OpenAI as the anchor customer. The concentration risk persists under a different name, but OpenAI's creditworthiness is considerably stronger.

The filing also revealed that Cerebras has shifted its business model. Rather than selling chips to customers, the company now operates its own data centers and offers compute as a cloud service, including its existing deal to power OpenAI's AI-assisted coding tools.

OpenAI's Three-Front Hardware Strategy

The Cerebras deal does not exist in isolation. OpenAI is running a multi-vector hardware strategy designed to reduce its dependence on any single chip supplier:

Front 1: Cerebras. The $20 billion deal secures non-NVIDIA inference capacity through 2028, with options through 2030. The equity warrants mean OpenAI profits if Cerebras succeeds as a public company.

Front 2: Custom ASICs with Broadcom. In October 2025, OpenAI and Broadcom announced a multi-year deal to co-develop 10 gigawatts of custom AI accelerators. The chip, codenamed "Titan," will be fabricated on TSMC's 3nm process with first deployments expected in the second half of 2026. A second-generation version is already planned for TSMC's A16 node.

Front 3: NVIDIA and AMD. OpenAI continues to operate one of the world's largest NVIDIA GPU fleets, with an estimated 10 gigawatts of NVIDIA infrastructure. AMD's Instinct MI series chips are part of the existing mix. These remain essential for training, where NVIDIA's CUDA ecosystem and interconnect technology have no current equal.

The total hardware commitment across all three fronts now exceeds an estimated 26 gigawatts. For context, that approaches the total power output of a mid-size European country.

The Inference Shift

The strategic logic behind the Cerebras deal reflects a fundamental change in how AI compute is consumed. In the early days of the LLM era, training dominated spending. Most tokens were burned during training runs that could last weeks or months. Inference was a fraction of total compute.

That ratio has inverted. As of 2026, inference accounts for approximately two-thirds of enterprise AI compute spending, according to Deloitte research. At CES 2026, Lenovo CEO Yang Yuanqing projected that inference would reach 80% of total AI compute demand within the year.

This matters because NVIDIA GPUs, while dominant for training, carry an architectural penalty for inference. The H100's reliance on external HBM creates a memory bandwidth bottleneck that limits how fast a model can generate tokens. Cerebras's SRAM-based architecture is designed to remove that bottleneck for weights held on the wafer: the memory sits micrometers from the compute cores rather than in separate HBM stacks beside the GPU die.
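A common back-of-the-envelope model makes the bottleneck concrete: if generating each token requires reading every weight from memory once, single-stream decode speed cannot exceed memory bandwidth divided by model size. The sketch below applies that bound to the H100 bandwidth quoted in this article. The 70-billion-parameter model, the 16-bit weights, and the function names are hypothetical, and the estimate ignores batching, KV-cache traffic, multi-chip sharding, and compute limits.

```python
def max_decode_tokens_per_s(bandwidth_bytes_per_s: float,
                            n_params: float,
                            bytes_per_param: float) -> float:
    """Upper bound on single-stream decode speed, assuming every generated
    token requires reading all model weights from memory once."""
    weight_bytes = n_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


def bandwidth_needed(tokens_per_s: float,
                     n_params: float,
                     bytes_per_param: float) -> float:
    """Memory bandwidth (bytes/s) required to sustain a target decode speed
    under the same one-weight-read-per-token assumption."""
    return tokens_per_s * n_params * bytes_per_param


H100_HBM_BYTES_PER_S = 3.35e12   # 3.35 TB/s, the figure quoted in the article
N_PARAMS = 70e9                  # hypothetical 70B-parameter model
BYTES_PER_PARAM = 2              # assumes 16-bit weights

h100_bound = max_decode_tokens_per_s(H100_HBM_BYTES_PER_S, N_PARAMS, BYTES_PER_PARAM)
needed = bandwidth_needed(1_000, N_PARAMS, BYTES_PER_PARAM)

print(f"H100 single-stream bound: {h100_bound:.1f} tokens/s")
print(f"Bandwidth for 1,000 tokens/s: {needed / 1e12:.0f} TB/s")
```

For this hypothetical model, a single H100 tops out at roughly 24 tokens per second per stream, while 1,000 tokens per second would need about 140 TB/s. Real deployments narrow the gap by batching requests and sharding weights across many GPUs, so this is a bound on one stream, not a benchmark.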

OpenAI generates hundreds of billions of tokens per day across ChatGPT, its API, and enterprise products. At that scale, even modest per-token cost reductions translate into billions of dollars in annual savings.

What This Means for NVIDIA

NVIDIA is not standing still. In December 2025, NVIDIA acquired Groq for $20 billion, its largest acquisition in history, specifically to address its inference gap. Groq's LPU (Language Processing Unit) uses a similar SRAM-based architecture that avoids the HBM bottleneck. The acquisition was widely interpreted as an admission that GPUs alone cannot compete on inference speed.

The symmetry is telling. NVIDIA, the world's largest AI chip seller, spent $20 billion acquiring an inference competitor. OpenAI, the world's largest AI chip buyer, is spending $20 billion incubating one. Both moves target the same structural problem from opposite sides of the market.

NVIDIA still dominates training. Its CUDA ecosystem, NVLink interconnects, and Blackwell architecture have no current competitor for multi-trillion-parameter training runs. But the inference market, now larger than training by revenue, is fragmenting. Custom ASICs from Google (TPUs), Amazon (Trainium), and now OpenAI (Titan) are all eroding NVIDIA's share of the inference workload.

TSMC's record Q1 2026 earnings confirm that total AI chip demand is still growing at 58% year-over-year. The question is not whether the market is shrinking. It is whether NVIDIA can hold its pricing power as customers like OpenAI build alternatives.

---

Takeaway: OpenAI's $20 billion commitment to Cerebras is not a chip purchase. It is a supply chain restructuring. By simultaneously financing Cerebras's data centers, taking an equity stake, and developing its own custom ASICs with Broadcom, OpenAI is building an inference infrastructure that does not depend on NVIDIA. The industry has shifted from a world where everyone bought NVIDIA GPUs to one where the largest AI companies are engineering their own silicon pipelines. The era of a single-vendor AI chip market is ending. What replaces it will be defined by these deals.

---

AI-Generated Content

This article was researched, written, and verified by Sonarlink's AI. All claims are sourced from verified publications. No fake bylines.
